In critical infrastructure and operational technology (OT) environments such as power grids, water treatment plants, manufacturing lines, pipelines, and transportation control systems, exposure management means continuously discovering, validating, and reducing the attack surface that could lead to physical disruption, safety incidents, or widespread outages.

Exposures here often hide in legacy programmable logic controllers (PLCs), remote terminal units (RTUs), and human-machine interfaces (HMIs); in weak segmentation between the enterprise IT and OT layers; in misconfigured remote access; or in subtle quirks of industrial protocols such as Modbus, DNP3, or OPC UA.

Because these systems must run almost continuously, any testing method that relies on active probing can delay control loops, trip safety interlocks by mistake, or cause unintended process changes.

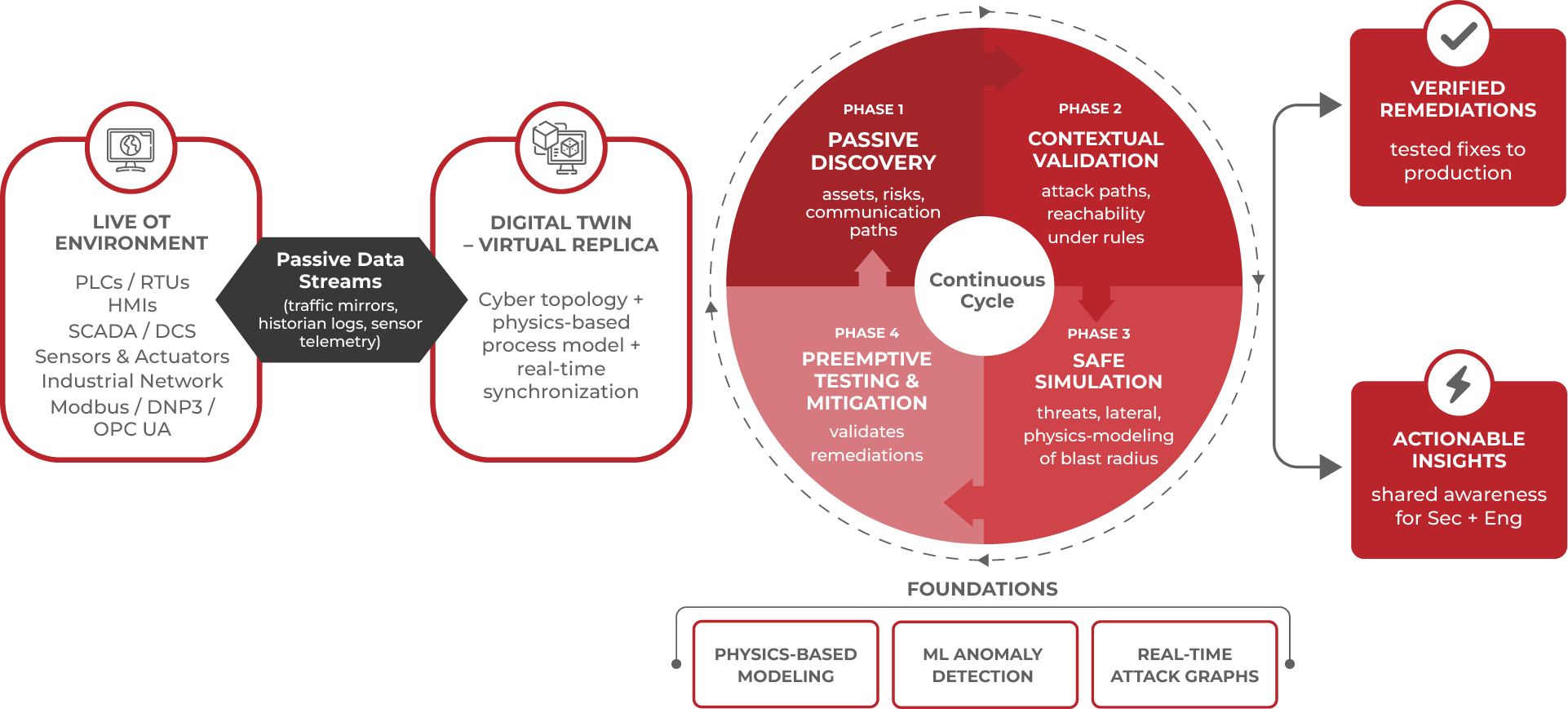

Digital twins address this by creating a high-fidelity virtual replica of the OT environment. The twin is fed by passive data streams such as network traffic mirrors, sensor telemetry, historian logs, and asset inventories. It stays continuously synchronized without ever communicating with real controllers. It captures not only cyber attributes such as assets, topologies, and configurations, but also the physical dynamics of the process, such as flow rates, pressures, temperatures, and actuator responses.
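To make the "passive sync" idea concrete, here is a minimal sketch of a twin's state store that is updated only from read-only samples. The sample format `(tag, timestamp, value)`, the `TwinState` class, and tag names like `PIT-101` are hypothetical illustrations, not any particular product's data model.

```python
from dataclasses import dataclass, field
from typing import Dict, Iterable, List, Tuple

# Hypothetical passive sample: (tag, timestamp_seconds, value), e.g. read from
# a historian export or a telemetry mirror. The twin never writes to devices.
Sample = Tuple[str, float, float]


@dataclass
class TwinState:
    """Toy twin state kept in sync purely from passively observed samples."""
    values: Dict[str, float] = field(default_factory=dict)
    last_seen: Dict[str, float] = field(default_factory=dict)

    def ingest(self, samples: Iterable[Sample]) -> int:
        """Apply samples in arrival order and return how many were accepted."""
        accepted = 0
        for tag, ts, value in samples:
            # Ignore records older than what we already hold, so late or
            # replayed historian rows cannot roll the twin backwards in time.
            if ts >= self.last_seen.get(tag, float("-inf")):
                self.values[tag] = value
                self.last_seen[tag] = ts
                accepted += 1
        return accepted

    def stale_tags(self, now: float, max_age: float) -> List[str]:
        """Tags whose newest reading is older than max_age seconds."""
        return sorted(t for t, ts in self.last_seen.items() if now - ts > max_age)


twin = TwinState()
twin.ingest([("PIT-101", 10.0, 4.2), ("FIT-201", 11.0, 37.5), ("PIT-101", 9.0, 3.9)])
print(twin.values)  # the late PIT-101 sample at t=9.0 was ignored
```

Tracking staleness per tag matters in this design. A passive twin cannot poll a device that has gone quiet, so a missing update must itself be treated as a signal.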

Digital Twins Power the Exposure Management Loop

The diagram below illustrates how digital twins enable a continuous and low-risk exposure management cycle for OT environments. By combining passive visibility, contextual validation, safe simulation, and preemptive mitigation, the digital twin helps organizations assess cyber-physical risk without disrupting live operations. This closed-loop approach allows teams to move from exposure identification to verified remediation while preserving safety, availability, and process integrity.

The result is a safe, repeatable cycle suited to cyber-physical systems:

Passive Discovery

The twin maintains a living inventory of all assets, including legacy devices that may have been long forgotten. It surfaces hidden risks that conventional tools may miss because they cannot safely scan OT networks aggressively, such as outdated firmware, overly permissive network policies, or unexpected communication paths.
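Passive discovery can be sketched as deriving an inventory from mirrored traffic and diffing it against the asset register and the intended flow policy. The flow-record shape, the function names, and the addresses are all hypothetical. Port 502 is the registered Modbus/TCP port and 20000 is the port commonly used for DNP3.

```python
from typing import Dict, Iterable, List, Set, Tuple

# Hypothetical flow record from a SPAN/mirror port: (src_ip, dst_ip, dst_port).
# Discovery is read-only: nothing here sends traffic to any device.
Flow = Tuple[str, str, int]


def build_inventory(flows: Iterable[Flow]) -> Dict[str, Set[int]]:
    """Map every host seen on the wire to the ports it was observed serving."""
    inventory: Dict[str, Set[int]] = {}
    for src, dst, port in flows:
        inventory.setdefault(src, set())
        inventory.setdefault(dst, set()).add(port)
    return inventory


def find_exposures(
    flows: Iterable[Flow], allowed: Set[Flow], registered: Set[str]
) -> Tuple[List[str], List[Flow]]:
    """Return (hosts missing from the asset register, flows outside policy)."""
    flow_list = list(flows)
    inventory = build_inventory(flow_list)
    unknown_assets = sorted(set(inventory) - registered)
    unexpected_flows = sorted({f for f in flow_list if f not in allowed})
    return unknown_assets, unexpected_flows


observed = [
    ("10.0.1.5", "10.0.2.10", 502),    # HMI -> PLC over Modbus/TCP (expected)
    ("10.0.9.9", "10.0.2.10", 502),    # unregistered host talking Modbus
    ("10.0.2.10", "10.0.3.4", 20000),  # PLC -> outstation over DNP3 (not in policy)
]
allowed = {("10.0.1.5", "10.0.2.10", 502)}
registered = {"10.0.1.5", "10.0.2.10", "10.0.3.4"}
print(find_exposures(observed, allowed, registered))
```

A real deployment would also decode protocol payloads passively to recover firmware versions. That step is omitted here.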

Contextual Validation of Attack Paths

Inside the twin, dynamic attack graphs map the moves a realistic adversary could make. For example, the twin can simulate how a compromised engineering workstation might use observed communication paths to pivot toward a critical PLC, triggering a chain of low-level faults along the way. Rather than relying on generic severity rankings, validation checks whether a path is actually reachable under the environment's specific constraints: firewall restrictions, protocol behaviors, and deterministic timing requirements.
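Reachability validation can be sketched as a breadth-first search over an attack graph whose edges are kept only if the firewall model permits them. The edge format, host names, and the `attack_path` function are hypothetical. A fuller model would also encode protocol behavior and timing constraints on each edge.

```python
from collections import deque
from typing import Dict, Iterable, List, Optional, Set, Tuple

# Hypothetical edge: (src_host, dst_host, protocol), e.g. derived from observed
# traffic. An edge is usable only if the firewall model also permits it.
Edge = Tuple[str, str, str]


def attack_path(
    edges: Iterable[Edge], firewall_allows: Set[Edge], start: str, target: str
) -> Optional[List[str]]:
    """Breadth-first search for the shortest permitted path from start to target."""
    adjacency: Dict[str, List[str]] = {}
    for src, dst, proto in edges:
        if (src, dst, proto) in firewall_allows:
            adjacency.setdefault(src, []).append(dst)

    parent: Dict[str, Optional[str]] = {start: None}
    queue = deque([start])
    while queue:
        node = queue.popleft()
        if node == target:
            # Walk parent pointers back to the start to rebuild the path.
            path: List[str] = []
            cur: Optional[str] = node
            while cur is not None:
                path.append(cur)
                cur = parent[cur]
            return path[::-1]
        for nxt in adjacency.get(node, []):
            if nxt not in parent:
                parent[nxt] = node
                queue.append(nxt)
    return None  # target is not reachable under the current constraints


edges = [
    ("eng-ws", "historian", "https"),
    ("historian", "plc-7", "modbus"),
    ("eng-ws", "plc-7", "dnp3"),  # observed once, but blocked by the firewall
]
allows = {("eng-ws", "historian", "https"), ("historian", "plc-7", "modbus")}
print(attack_path(edges, allows, "eng-ws", "plc-7"))
```

The direct edge exists in the traffic data but is filtered out by policy, so the validated path is the two-hop pivot through the historian.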

Safe Simulation of Threats and Physical Impacts

In the isolated sandbox, teams run consequence-aware scenarios.

Because the twin includes physics-based models, simulations show real-world outcomes such as pressure spikes in a pipeline, turbine overspeed, or safety system trips. This lets teams estimate the blast radius in terms of safety, reliability, and operational continuity.
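As a toy example of a consequence-aware scenario, the sketch below uses a lumped-compliance model of a pipe segment, dP/dt = (K/V)(q_in − q_out), integrated with forward Euler. It simulates an attacker closing the downstream valve while the pump keeps running. The model, parameter values, and function names are illustrative assumptions. The model deliberately ignores water-hammer wave dynamics, pipe elasticity, and pump curves that a real twin would include.

```python
from typing import Callable, Optional, Tuple


def simulate_pressure(
    p0: float,                        # initial pressure, Pa
    q_in: float,                      # pump inflow, m^3/s (held constant)
    q_out: Callable[[float], float],  # outflow through the downstream valve, m^3/s
    bulk_modulus: float,              # effective bulk modulus of the fluid, Pa
    volume: float,                    # lumped segment volume, m^3
    trip_pressure: float,             # safety-system trip setpoint, Pa
    dt: float,
    t_end: float,
) -> Tuple[float, Optional[float]]:
    """Forward-Euler integration of dP/dt = (K / V) * (q_in - q_out(t)).

    Returns (peak pressure, time of trip or None). Integration stops at the
    trip, on the assumption that the safety system takes over from there.
    """
    p, peak = p0, p0
    for i in range(int(round(t_end / dt))):
        t = i * dt
        p += (bulk_modulus / volume) * (q_in - q_out(t)) * dt
        peak = max(peak, p)
        if p >= trip_pressure:
            return peak, t + dt
    return peak, None


# Scenario: an attacker slams the downstream valve shut at t = 5 s.
def attacked_valve(t: float) -> float:
    return 0.0 if t >= 5.0 else 0.1


def nominal_valve(t: float) -> float:
    return 0.1


params = dict(p0=5e5, q_in=0.1, bulk_modulus=2.2e9, volume=100.0,
              trip_pressure=1e6, dt=0.01, t_end=10.0)
print(simulate_pressure(q_out=attacked_valve, **params))
print(simulate_pressure(q_out=nominal_valve, **params))
```

With these made-up numbers, pressure climbs about 22 bar per second after the closure, and the trip setpoint is crossed roughly 0.23 s later. The time-to-trip output is the kind of figure that feeds a blast-radius estimate.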

Preemptive Testing and Mitigation

Proposed mitigations, such as stricter segmentation rules, policy changes, or configuration hardening, are tested in the twin first. Engineers verify that a remedy closes the validated attack path without interfering with legitimate control commands or adding excessive latency to safety loops. Only remediations that have been tested and shown to be low-risk go into production, usually under controlled orchestration.
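The acceptance check described above can be sketched as three tests against a candidate rule set. The first confirms the attack path is closed. The second confirms no required control flow is blocked. The third confirms no required flow exceeds its latency budget. Everything here is a hypothetical illustration: the edge format, the latency figures, and the `evaluate_mitigation` function. In practice the latency numbers would come from the twin's own measurements.

```python
from typing import Dict, Set, Tuple

# Hypothetical model: allowed flows as (src, dst, protocol) edges.
Edge = Tuple[str, str, str]


def reachable(allowed: Set[Edge], start: str, target: str) -> bool:
    """Depth-first reachability over the allowed-flow graph."""
    seen, stack = {start}, [start]
    while stack:
        node = stack.pop()
        if node == target:
            return True
        for src, dst, _proto in allowed:
            if src == node and dst not in seen:
                seen.add(dst)
                stack.append(dst)
    return False


def evaluate_mitigation(
    allowed: Set[Edge],
    blocked: Set[Edge],              # rules the candidate mitigation removes
    attack: Tuple[str, str],         # (entry point, target) of the validated path
    required: Dict[Edge, float],     # legitimate control flow -> latency budget, ms
    baseline_ms: Dict[Edge, float],  # latency measured in the twin today
    added_ms: Dict[Edge, float],     # extra latency the mitigation introduces
) -> Dict[str, object]:
    candidate = allowed - blocked
    broken = sorted(f for f in required if f not in candidate)
    too_slow = sorted(
        f for f, budget in required.items()
        if f in candidate and baseline_ms[f] + added_ms.get(f, 0.0) > budget
    )
    path_closed = not reachable(candidate, *attack)
    return {
        "path_closed": path_closed,
        "broken_flows": broken,
        "latency_violations": too_slow,
        "accept": path_closed and not broken and not too_slow,
    }


allowed = {
    ("eng-ws", "historian", "https"),
    ("historian", "plc-7", "modbus"),
    ("hmi-1", "plc-7", "modbus"),
}
required = {("hmi-1", "plc-7", "modbus"): 50.0}
baseline = {("hmi-1", "plc-7", "modbus"): 12.0}
result = evaluate_mitigation(
    allowed,
    blocked={("historian", "plc-7", "modbus")},
    attack=("eng-ws", "plc-7"),
    required=required,
    baseline_ms=baseline,
    added_ms={("hmi-1", "plc-7", "modbus"): 5.0},
)
print(result)
```

A mitigation is accepted only if all three checks pass. Blocking the HMI's Modbus flow instead, for example, would leave the historian pivot open and break a required control path.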

This loop compresses the time from exposure detection to verified risk reduction while preserving the stringent availability demands of critical systems.

Technical Intricacies That Make It Effective

The strength of this approach comes from how tightly the cyber and physical models are coupled. A high-fidelity twin can use time-series neural networks (such as LSTM or TCN architectures), constrained by physical laws such as mass balance and energy conservation, to predict expected normal behavior. Residuals between expected and observed states are then fed to anomaly detection engines that can distinguish normal operations from single-stage attacks and multi-stage intrusions.

As the twin ingests live data, its attack graphs update in real time, revealing choke points where one well-placed control could cut off several possible attack paths. Simulations let teams rehearse complex sequences, including supply chain compromises or insider-like actions, without touching real equipment.

In research frameworks, twins of this kind have shown strong results on SCADA testbed data (such as the SWaT and WADI datasets), with high detection accuracy, few false positives, and, in some configurations, sub-second latency. They also support upgrade planning: parallel shadow instances let teams test more resilient designs (alternative redundancy schemes or design diversity) before a seamless cutover, which makes modernization less disruptive.
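The residual idea can be illustrated without a neural network. This sketch swaps in a plain mass-balance predictor for a tank, A·dh/dt = q_in − q_out, and runs a one-sided CUSUM over the residual magnitudes. It is a simplified stand-in, not the LSTM/TCN approach described above. It only flags an anomaly and does not classify attack stages. The drift and threshold values, the data, and the function name are hypothetical.

```python
from typing import Optional, Sequence


def detect_mass_balance_anomaly(
    levels: Sequence[float],  # observed tank level per sample, m
    q_in: Sequence[float],    # reported inflow per sample, m^3/s
    q_out: Sequence[float],   # reported outflow per sample, m^3/s
    area: float,              # tank cross-sectional area, m^2
    dt: float,                # sample period, s
    drift: float,             # CUSUM slack: residual size tolerated as noise
    threshold: float,         # CUSUM alarm level
) -> Optional[int]:
    """Return the first sample index at which the CUSUM alarm fires, else None.

    The predictor is plain mass balance, A * dh/dt = q_in - q_out; a learned
    model (e.g. a physics-constrained LSTM or TCN) would replace this line.
    """
    s = 0.0
    for k in range(1, len(levels)):
        predicted = levels[k - 1] + dt / area * (q_in[k - 1] - q_out[k - 1])
        residual = levels[k] - predicted
        # One-sided CUSUM on residual magnitude: small persistent deviations
        # accumulate, while isolated noise below `drift` decays back to zero.
        s = max(0.0, s + abs(residual) - drift)
        if s > threshold:
            return k
    return None


# Flows report a balanced 0.5 m^3/s in and out, yet from k = 11 the level
# creeps up 0.02 m per step, as if the flow readings were being spoofed.
levels = [1.0] * 11 + [1.0 + 0.02 * j for j in range(1, 10)]
flows = [0.5] * len(levels)
print(detect_mass_balance_anomaly(levels, flows, flows, area=2.0, dt=1.0,
                                  drift=0.005, threshold=0.05))
```

Each individual residual (0.02 m) might pass a simple per-sample threshold. The CUSUM accumulates the persistent deviation and fires a few samples after it begins.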